Why Governance Architecture Must Precede Lean (post-1988) Methods and APS-Centric Scheduling Models

1. Executive Summary

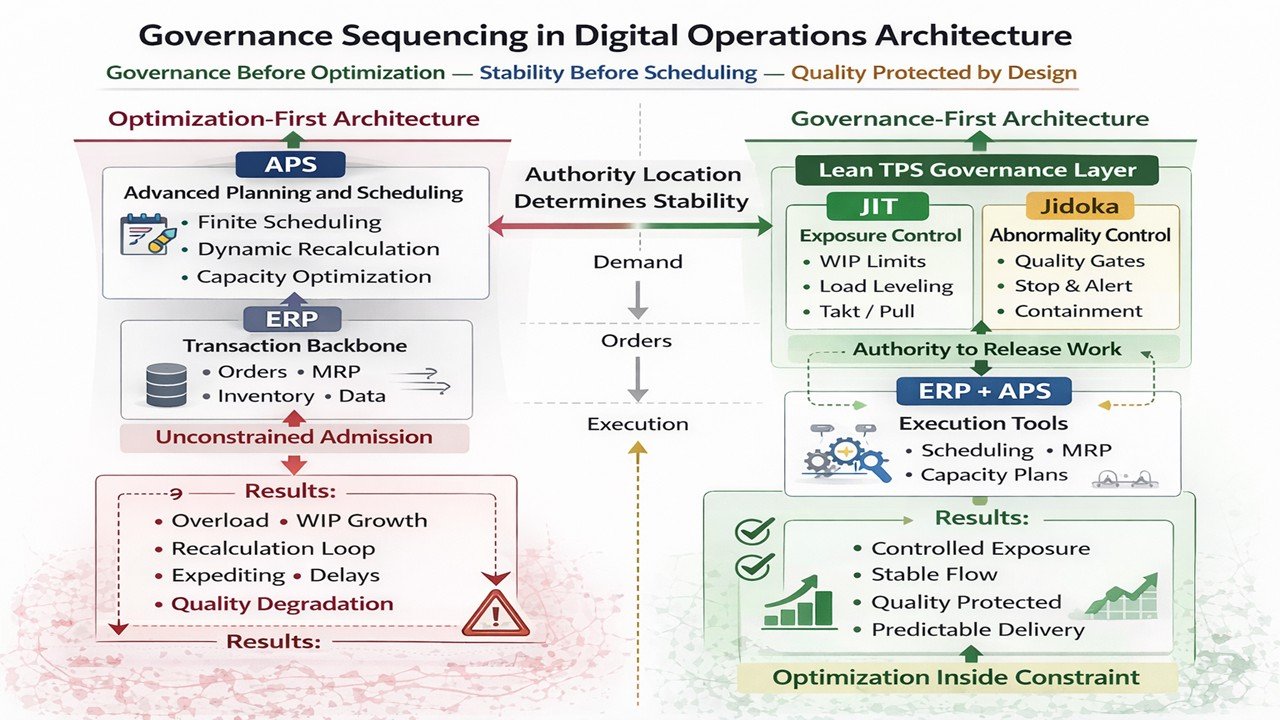

Modern digital operations frequently place optimization ahead of governance. Advanced Planning and Scheduling (APS) systems perform finite scheduling, dynamic recalculation, and capacity optimization within Enterprise Resource Planning (ERP) environments. These tools improve sequencing precision. They do not inherently govern workload exposure.

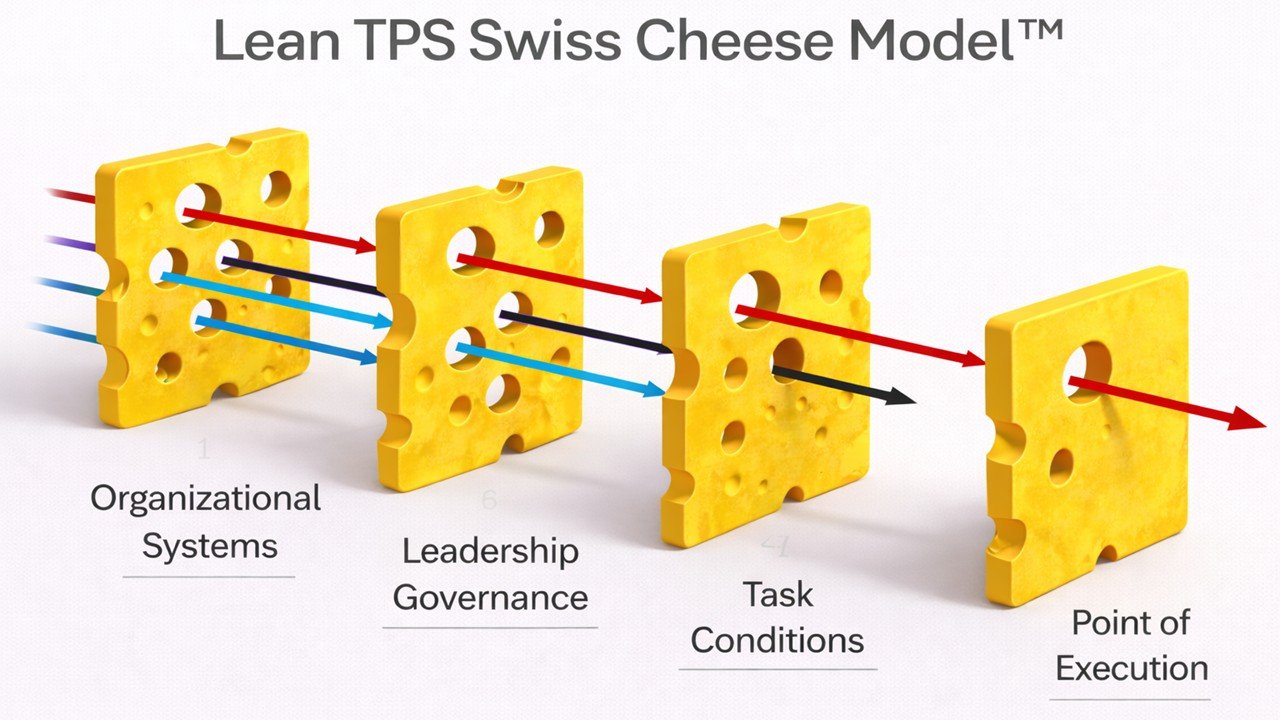

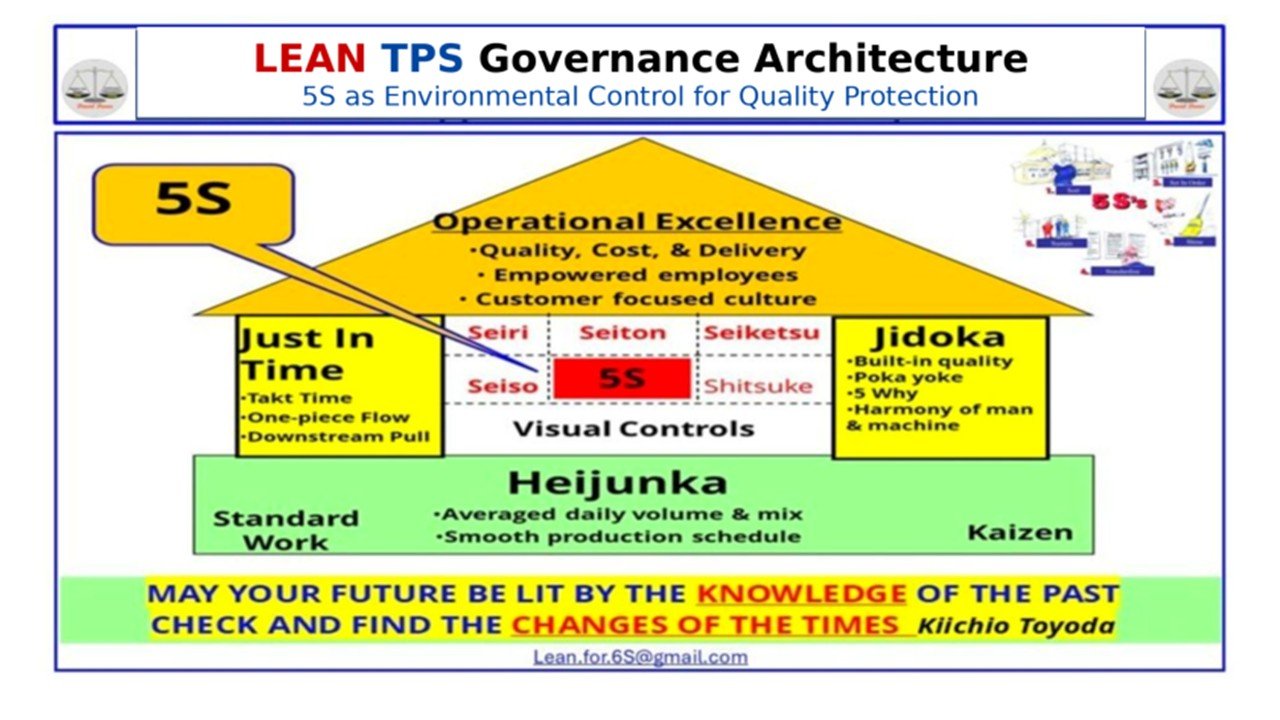

Lean TPS defines governance at a different architectural layer. Governance precedes optimization. It determines whether work is permitted to enter the system, under what quantitative limits, and under what conditions abnormality requires interruption. Quality is protected through structural constraint before performance is optimized through calculation.

Two control mechanisms define this governance architecture.

Just In Time governs quantity exposure. Work-in-process is intentionally constrained through defined release limits, load leveling, and pull discipline. Stability emerges when workload entering the system does not exceed demonstrated sustainable capacity.

Jidoka governs abnormality exposure. Deviation from defined normal conditions requires interruption and correction. Detection without enforced stop does not constitute control. Quality is protected at the source through structural obligation rather than discretionary response.

ERP systems function as transactional backbones. They manage orders, material requirements, inventory, and financial records. APS systems operate as optimization layers within or alongside ERP. They improve sequencing logic and capacity utilization. Neither ERP nor APS inherently defines enforceable exposure limits unless governance is architected explicitly above them.

When digital architecture places APS optimization logic before governance constraint, admission becomes conditionally bounded rather than structurally capped. Recalculation compensates for overload after it has entered the system. Under volatility, this sequencing amplifies instability. As utilization approaches 100 percent, waiting time increases nonlinearly. Variability propagates. Expediting behavior rises. Schedule churn intensifies.

Quality degradation begins before visible failure. Sustained overload increases defect probability, rework, and lead time variance. Exposure expands while optimization metrics may continue to appear locally efficient. Stability erodes even when computational performance improves.

A governance-first digital model reverses the sequence. Lean TPS governance defines exposure limits and abnormality response before ERP executes transactions and APS optimizes schedules. Optimization operates within enforced boundaries. Workload remains controlled. Variability is surfaced early rather than amplified. Quality is protected as a governing condition rather than evaluated solely as an outcome.

The structural question for modern digital operations is therefore precise:

Where does authority to constrain workload reside in your architecture, and what prevents optimization logic from overriding governance under pressure?

1.1 Governance as Foundational Boundary

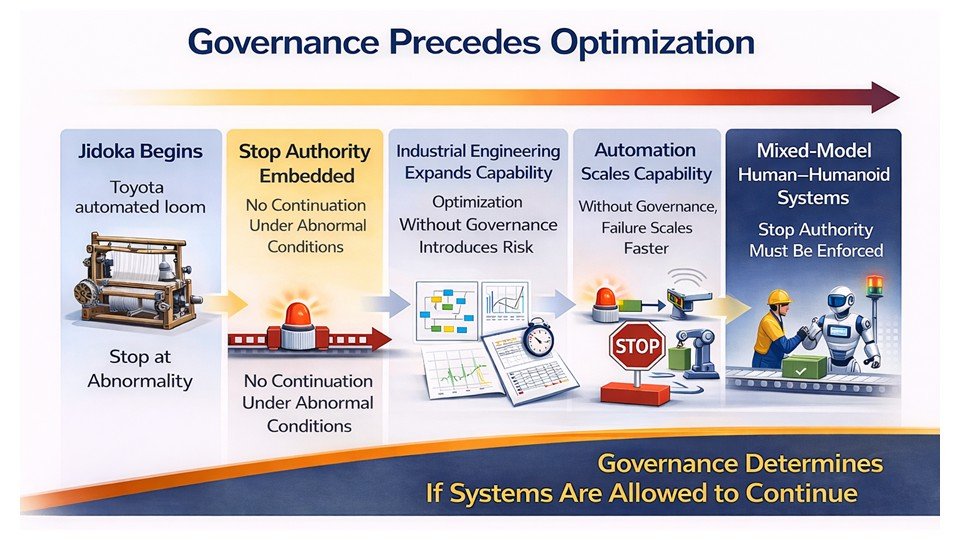

Engineering analysis increases system capability. Governance determines what is operationally permitted once the system is under load. The distinction is structural. It is not philosophical.

Optimization disciplines, whether Industrial Engineering, Lean Six Sigma, or digital scheduling models, improve performance through measurement, modeling, and recalculation. They increase visibility into capacity, variation, routing flexibility, and sequencing alternatives. These contributions are legitimate and technically rigorous.

Governance operates at a different layer. Governance defines non-negotiable boundaries. It determines how much work may enter the system, what conditions constitute normal operation, and what actions are required when those conditions are violated. Governance does not describe how to optimize performance. Governance determines whether performance may proceed.

When operating conditions deteriorate under volatility, the distinction becomes decisive. If the system relies primarily on recalculation, reprioritization, and managerial discretion, optimization governs behavior. If the system constrains exposure structurally and enforces interruption when abnormality appears, governance governs behavior.

This boundary precedes any discussion of job shop versus assembly environments, manual scheduling versus digital scheduling, or push versus pull terminology. The question is not whether optimization improves capability. The question is whether optimization operates inside defined limits, or whether it defines the limits.

Lean TPS was constructed on the premise that governance must precede optimization. Analytical capability defines what is technically possible. Governance defines what is permitted under pressure.

The remainder of this paper examines how that ordering applies within modern Enterprise Resource Planning (ERP) and Advanced Planning and Scheduling (APS) architectures.

1.2 Where Structural Divergence Occurs

Structural divergence becomes visible when a system begins operating under volatility rather than when it is modeled under normal conditions.

Analytical disciplines define how a system should perform when assumptions hold. Capacity is measured. Routing logic is evaluated. Finite schedules are constructed. Preventive maintenance intervals are defined. Standard work content is calculated. These outputs describe intended behavior under stable conditions.

The decisive question emerges when conditions deteriorate.

When due-date pressure intensifies, when uptime fluctuates, when variability increases, and when management demands throughput recovery, who governs continuation?

In many optimization-centered environments, engineered standards remain technically sound but operationally discretionary. Cycle times are defined yet compressed under pressure. Preventive tasks are scheduled yet deferred when delivery risk escalates. Finite capacity limits are calculated yet additional work is admitted to satisfy commitments. Abnormal conditions are recorded while production continues.

Under these circumstances, optimization remains advisory. Its influence depends on management discipline rather than structural enforcement.

Lean TPS was constructed to eliminate this discretionary gap between design and behavior.

Normal conditions are defined explicitly through Standardized Work. Sequence, timing, and in-process inventory are specified as enforceable baselines. Exposure is limited structurally through controlled work-in-process boundaries. Load is stabilized within demonstrated capacity. Abnormality requires interruption through Jidoka. Continuation is not permitted without correction and confirmation.

These mechanisms precede optimization.

In a governance-first architecture, normal is defined before sequencing occurs. Exposure is capped before workload accumulates. Stop authority is embedded before delivery pressure intensifies. Optimization operates inside defined limits rather than defining them.

The difference is not analytical competence. The difference is embedded authority.

When continuation authority remains discretionary, standards are stretched under pressure. When continuation authority is structurally constrained, instability is surfaced early and leadership must resolve causes before flow resumes.

This divergence becomes critical in digital environments where recalculation can occur continuously. Without governance preceding optimization, analytical capability amplifies instability rather than absorbing it.

Later sections locate this divergence within modern ERP and APS architectures.

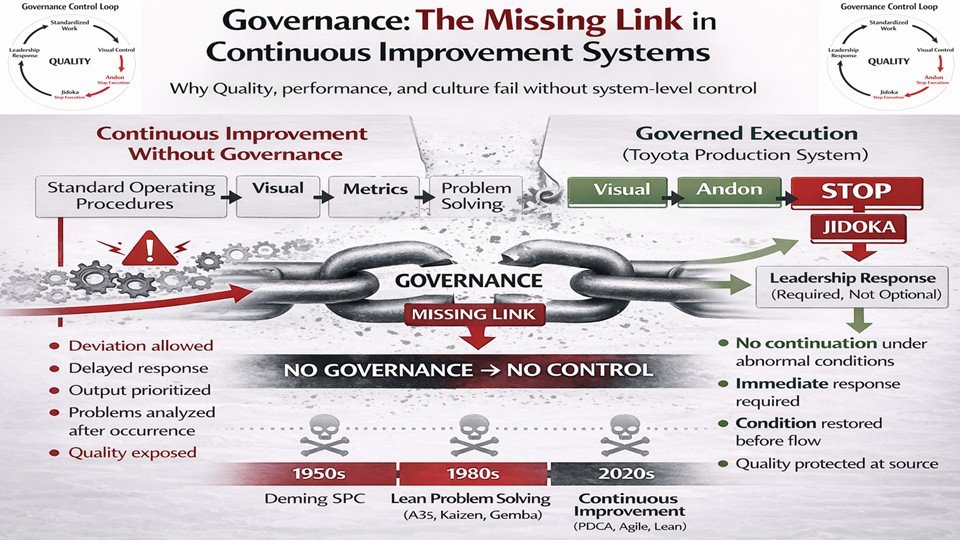

1.3 The Non-Negotiable Distinction

The distinction between governance-first architecture and optimization-first architecture can be defined precisely.

Defined normal establishes how work must be executed. Sequence, timing, and in-process inventory conditions are specified explicitly. Normal is not a target range or historical average. It is the enforceable reference condition required for stability. When normal is clearly defined, deviation becomes detectable. When normal is ambiguous, deviation blends into routine variation.

Exposure caps limit how much instability may enter the system at one time. Work in process is bounded. Active jobs are constrained. Queue length is intentionally restricted. The system does not attempt to buffer unlimited variability. It makes instability visible by limiting admission.

Stop authority prevents continuation under abnormal conditions. Detection alone does not alter system behavior. Information about deviation does not constitute control. When interruption authority is embedded, abnormality changes the operational state immediately. Production does not proceed while problems are documented for later resolution. Flow resumes only after defined conditions are restored.

Leadership obligation ensures that response remains within daily management responsibility. Abnormality cannot be deferred to future projects or discretionary review. Escalation requires engagement until stability is restored. Responsibility remains inside the operating system.

These elements constitute governance. They are not optimization techniques. Structural governance is defined by boundary conditions that cannot be relaxed through optimization parameter adjustment.

Without defined normal, deviation cannot be identified reliably. Without exposure caps, variability compounds as workload exceeds demonstrated capacity. Without stop authority, detection produces data but not control. Without leadership obligation, correction becomes discretionary.
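The contrast between detection and stop authority described above can be sketched as a small state machine. The following Python is a minimal illustration under stated assumptions, not a description of any particular andon or execution system. The names `Station`, `report_abnormality`, and `confirm_restored` are hypothetical.

```python
from enum import Enum


class State(Enum):
    RUNNING = "running"
    STOPPED = "stopped"


class Station:
    """Hypothetical work station with embedded stop authority."""

    def __init__(self):
        self.state = State.RUNNING
        self.open_abnormalities = set()
        self.completed = 0

    def report_abnormality(self, code):
        # Detection changes the operational state immediately;
        # it is not merely logged for later review.
        self.open_abnormalities.add(code)
        self.state = State.STOPPED

    def confirm_restored(self, code):
        # Each abnormality must be confirmed as corrected before restart.
        self.open_abnormalities.discard(code)
        if not self.open_abnormalities:
            self.state = State.RUNNING

    def process_unit(self):
        # Continuation is refused while any abnormality remains open.
        if self.state is State.STOPPED:
            return False
        self.completed += 1
        return True


station = Station()
first = station.process_unit()            # normal conditions: work proceeds
station.report_abnormality("torque_out_of_range")
blocked = station.process_unit()          # the stop is enforced, not advisory
station.confirm_restored("torque_out_of_range")
resumed = station.process_unit()          # flow resumes after restoration
```

A system that only logged the abnormality would return `True` on the second call. The design choice here is that the refusal lives inside `process_unit` rather than in a separate review step.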

Optimization disciplines can design each of these elements. They can measure sequence, model capacity, recommend limits, and propose escalation logic. What optimization alone cannot guarantee is enforcement when delivery pressure intensifies. Enforcement is a structural property, not a modeling outcome.

Lean TPS embeds enforcement into daily operation. Continuation requires compliance with defined conditions. Stability is not dependent on managerial restraint. It is defined architecturally.

This distinction governs the remainder of this paper.

The question is not whether scheduling algorithms can compute efficient release times. The question is whether the architecture defines who may release work, how much workload may be admitted concurrently, and what must occur before continuation when deviation appears.

Governance precedes optimization.

That ordering determines system behavior under volatility.

The following sections examine how this boundary applies within modern ERP and APS architectures.

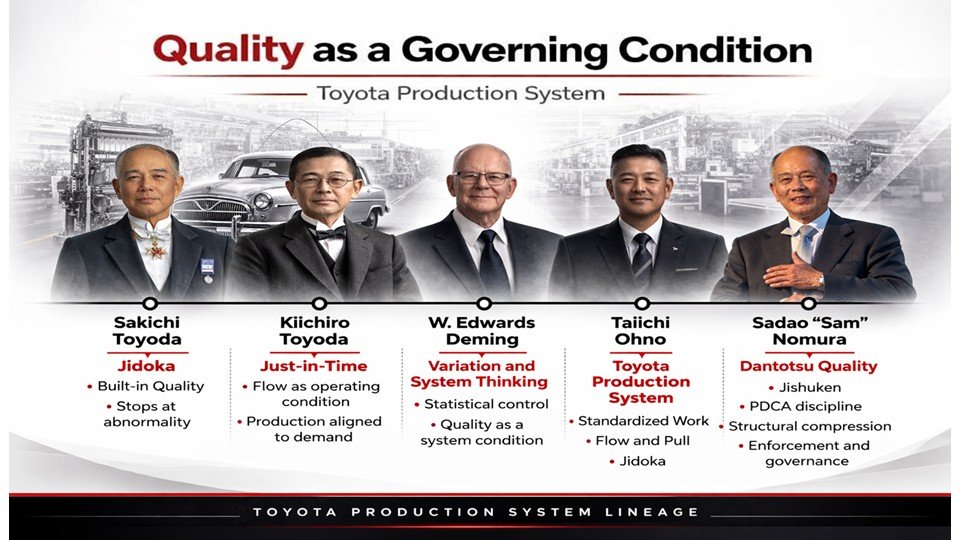

2. The Structural Inversion of Lean (post-1988)

2.1 Repositioning TPS as Governing Architecture

A structural shift in how TPS is positioned affects how digital optimization models are interpreted.

The Toyota Production System was designed as an operating architecture with enforced conditions. Defined normal, exposure caps, stop authority, and leadership obligation were not conceptual guidance. They were embedded requirements governing daily behavior.

Lean (post-1988) frameworks frequently reposition TPS as origin material rather than as governing reference. In this framing, TPS becomes the historical source while Lean becomes the interpretive container.

This shift alters the evaluative boundary.

When TPS functions as governing reference, claims must be verified against operational conditions. Defined normal must be observable. Exposure caps must be present. Stop authority must interrupt continuation. Leadership obligation must be active. Governance is confirmed through enforcement.

When TPS is treated as precursor to a broader Lean framework, evaluation often shifts toward alignment with principles, maturity models, or transformation narratives. Verification becomes interpretive rather than structural. Practices may be described as Lean while operating without embedded exposure control or interruption authority.

This inversion matters because it influences how digital scheduling systems are assessed.

If Lean (post-1988) functions as interpretive umbrella, APS optimization tools may appear consistent with Lean because they improve efficiency or reduce visible waste. The question of governance sequencing may not arise, because the reference system that enforced governance-first ordering has been repositioned as historical context rather than architectural requirement.

Restoring TPS as governing reference re-establishes the correct boundary.

The relevant question is not whether digital optimization aligns with Lean language. The question is whether governance conditions are structurally embedded before optimization is applied.

Later sections evaluate ERP and APS architectures against that boundary.

2.2 Reclassification as Ideology

A further structural shift occurs when the Toyota Production System is described primarily as philosophy rather than as operating architecture.

Operating systems are verified through observable behavior under load. Ideologies are discussed and interpreted. They are evaluated through coherence and persuasion rather than through enforced conditions.

When TPS functions as operating architecture, evaluation is empirical. Defined normal must be present. Exposure caps must constrain workload. Stop authority must interrupt continuation. Leadership must respond before production resumes. These conditions are either embedded or they are not.

When TPS is reframed as ideology, evaluation becomes interpretive. Organizations assess alignment with principles such as continuous improvement, respect for people, or learning culture. Structural enforcement mechanisms become secondary to value alignment.

Reclassification does not reject TPS vocabulary. It alters its authority.

Standardized Work becomes a technique rather than a non-negotiable baseline. Stop logic becomes a commitment to quality rather than an interruption requirement. Leadership obligation becomes engagement philosophy rather than structural mandate.

Optionality enters at this point.

Governance conditions are binary. Work either stops under abnormality or it continues. Workload either remains capped or it expands. Leadership either is required to respond or response is discretionary. These are architectural conditions, not interpretive preferences.

When enforcement becomes discretionary, competing pressures influence decisions. Under volatility, temporary exceptions expand. Standards are stretched. Drift accumulates.

This reclassification matters for digital architecture. If TPS is treated as philosophy, replacing governance mechanisms with computational scheduling models appears reasonable. If TPS is understood as enforceable governance architecture, sequencing of authority must be examined before replacement is assumed.

Later sections evaluate ERP and APS architectures against that enforcement boundary.

2.3 Institutional Absorption

Structural inversion stabilizes when it becomes institutional.

When the Toyota Production System is absorbed into credentialing systems, academic curricula, and generalized improvement certifications, its status shifts from operating discipline to intellectual content.

At that point, TPS can be studied without being operated.

Individuals may demonstrate fluency in terminology, history, and conceptual structure while working inside systems that do not embed governance conditions. Standardized Work, pull, leveling, and continuous improvement become components within broader improvement hierarchies rather than enforceable architectural requirements.

Legitimacy shifts from operational verification to credentialed competence.

When evaluation depends on certification or conceptual alignment rather than on embedded enforcement, vocabulary can persist while governance erodes. Organizations may reference pull or flow while admitting work without structural caps. Scheduling models may reference leveling while sequencing inside unconstrained admission structures.

The language remains. The enforcement boundary does not.

This institutional shift influences how digital optimization models are assessed. If TPS is treated as one conceptual contributor within a generalized improvement framework, replacing governance mechanisms with computational scheduling logic appears reasonable. The absence of enforced exposure control may not be recognized as structural risk.

Restoring TPS as governance architecture re-establishes the evaluative boundary. The question becomes whether defined normal, exposure caps, interruption authority, and leadership obligation are embedded before digital optimization is applied.

The next section moves from interpretive framing to structural placement within ERP and APS architectures.

3. The Job Shop Argument Properly Framed

3.1 The APS-Centric Claim

Advocates of Advanced Planning and Scheduling–centric control models in high-mix, low-volume environments present a technically coherent argument.

They contend that traditional Toyota Production System mechanisms such as Heijunka and Kanban are structurally unsuitable for complex, order-driven production systems characterized by routing variability, unpredictable demand, diverse part numbers, and due-date-driven commitments. In machining, aerospace components, mold and die production, and engineer-to-order fabrication, individual orders may represent unique configurations. Routing sequences differ across jobs, setup times vary materially between operations, constraints shift depending on order composition, and demand patterns are irregular rather than repetitive.

Under these conditions, signal-based pull systems and leveled sequencing are described as impractical at the finished product level because stable repetition does not exist.

The proposed alternative is computational release control. Time-based release mechanisms calculate when a job should enter the shop floor using due dates, routing structures, operation times, and current system state. Modern APS systems dynamically evaluate load conditions, recalculate release timing as disruption occurs, and sequence work to minimize congestion and improve due-date performance. Controlled push models extend this logic by determining release through calculated timing rather than through explicit authorization signals, and by managing congestion through finite capacity scheduling rather than fixed exposure caps.

The central assertion is that in high-variety environments, algorithmic scheduling provides superior control of work-in-process compared to static signal-based mechanisms derived from repetitive assembly contexts.

This is a legitimate planning argument. It addresses routing diversity, finite capacity modeling, sequencing precision, and due-date performance in complex environments. It engages real operational constraints that cannot be dismissed.

However, it remains a planning-layer argument. It focuses on how work should be sequenced and timed after admission into the system. It does not inherently define who holds admission authority, how much workload may be admitted concurrently, or what structural limits constrain exposure under volatility.

That distinction frames the remainder of the analysis. The next section examines whether computational release timing substitutes for governance architecture or operates within architecture that must be defined independently of optimization logic.

3.2 The Stated Critique of TPS in High-Mix, Low-Volume Contexts

Within APS-centric framing, Toyota Production System mechanisms are frequently interpreted as scheduling tools suited primarily for repetitive assembly environments.

Heijunka is described as a demand-smoothing technique appropriate for stable finished goods volume and predictable mix. Kanban is characterized as infeasible in environments with numerous part numbers, routing variability, and irregular order patterns. Pull systems are framed as incompatible with due-date-driven release requirements because signal-based authorization is viewed as insufficient for complex, routing-diverse job shops.

From these premises, TPS is presented as structurally mismatched to high-variety production environments.

These critiques share a common assumption: that TPS is fundamentally a scheduling method developed for repetitive production contexts. This assumption misclassifies the architecture.

TPS was not constructed as a scheduling algorithm. It was constructed as a governance architecture that defines admission authority, limits exposure, embeds interruption rules, and assigns responsibility for response under abnormal conditions.

Heijunka, Kanban, and pull mechanisms are often evaluated as techniques for smoothing output or replenishing repetitive demand. Within TPS architecture, their function is structural rather than cosmetic. They act as constraints that regulate how much work may enter the system and under what authorization work may proceed.

If TPS is evaluated solely as a schedule generator, it will appear mismatched to volatile job shop conditions. High-mix environments do not present repetitive finished goods signals, and routing diversity complicates simplistic interpretations of leveling and replenishment.

If TPS is evaluated as governance architecture, the category shifts. The relevant question becomes whether high-mix environments require defined admission authority, bounded exposure, interruption rules, and leadership obligation under pressure. The comparison is no longer between assembly and job shop geometry; it is between alternative models of control.

When TPS is misclassified as scheduling logic, evaluation remains confined to the planning layer. When TPS is understood as governance architecture, evaluation shifts to the authority layer that determines continuation permission.

Clarifying this boundary is necessary before assessing whether APS-centric models are superior, complementary, or insufficient as substitutes. The following section isolates that authority distinction explicitly.

3.3 Clarifying the Category Boundary

Advanced Planning and Scheduling models operate within the planning layer of a production system. Their primary function is to optimize release timing and sequencing for work expected to flow through the system. They calculate when jobs should enter the shop floor based on due dates, routing structure, expected processing times, and current capacity status. They determine execution order to improve throughput, reduce lateness, and moderate work-in-process growth.

These models assume that work will be admitted and then seek to optimize its movement within that admitted set.

Lean TPS operates at a different architectural layer. It governs whether work is permitted to enter the system under defined conditions. Admission is not merely calculated; it is authorized. Authorization is conditional on exposure limits, defined normal work, and verified system stability.

These functions are not interchangeable. Optimization-first logic evaluates timing relative to projected system state and asks how performance can be improved through calculated release and sequencing. Governance-first logic evaluates boundary conditions and asks whether release is permitted at all under current structural constraints.

The distinction becomes decisive under volatility. When release authority is discretionary, admission expands under due-date pressure. Even when release timing is calculated carefully, cumulative discretionary admission increases total exposure. Work-in-process grows, utilization rises toward saturation, variability propagates, and lead times extend nonlinearly. Scheduling engines can resequence work intelligently, but they cannot remove structural overload once it has been admitted.

When exposure is capped and continuation requires compliance with defined normal conditions, volatility is surfaced immediately rather than absorbed silently. Admission stops when defined limits are reached. Abnormality interrupts continuation. Instability becomes visible before it compounds.
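The two admission behaviors above can be compared with a small deterministic model. This is a sketch under simplifying assumptions: one constrained resource, whole jobs, fixed capacity per period, and invented demand figures. The function `simulate` and its parameters are hypothetical.

```python
def simulate(arrivals, capacity, wip_cap=None):
    """Return (wip_history, held_history) for a single constrained resource.

    arrivals: jobs requesting admission in each period
    capacity: jobs the resource can complete per period
    wip_cap:  maximum admitted work-in-process, or None for no cap
    """
    wip, held = 0, 0
    wip_history, held_history = [], []
    for arriving in arrivals:
        held += arriving
        # Admit everything when uncapped; otherwise only up to the cap.
        room = held if wip_cap is None else max(0, min(held, wip_cap - wip))
        wip += room
        held -= room
        # The resource completes at most `capacity` jobs per period.
        wip -= min(wip, capacity)
        wip_history.append(wip)
        held_history.append(held)
    return wip_history, held_history


arrivals = [4, 4, 6, 6, 6, 4]   # demand exceeds capacity in periods 3 to 5
uncapped_wip, uncapped_held = simulate(arrivals, capacity=4)
capped_wip, capped_held = simulate(arrivals, capacity=4, wip_cap=5)
```

In this sketch the cap does not reduce demand. The same six unfinished jobs exist at the end of both runs. Without the cap they sit inside the system as work-in-process. With the cap, five of them are held at the boundary, where the overload is visible and must be resolved before admission.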

This distinction is not a debate between push and pull terminology, nor between computational sophistication and simplicity. Both architectures can employ advanced analytical tools. The decisive issue concerns the ordering of authority. When calculation precedes constraint, governance functions as advisory guidance subject to override. When constraint precedes calculation, optimization operates within enforced limits that define continuation permission.

That ordering determines whether volatility is absorbed structurally at the boundary or redistributed computationally after admission. Section 4 examines how overload propagates once admission authority is mis-sequenced.

4. Release Timing vs Admission Authority

4.1 What APS Optimizes

Advanced Planning and Scheduling–centric control models address a defined technical problem: determining when jobs should be released and how they should be sequenced so that due dates are met while lead time and congestion are minimized.

In high-mix, low-volume environments, routing paths differ across jobs. Cycle times vary. Setup requirements shift. Machine availability changes due to maintenance and unplanned downtime. Due dates are specific to individual orders. Constraints fluctuate with product mix. Under these conditions, static dispatching rules are often inadequate.

Modern scheduling engines incorporate detailed inputs. They model routing structures across work centers. They incorporate cycle times, setup times, transfer times, and machine availability. They include due dates, priorities, and contractual commitments. They apply priority rules and optimization heuristics. Finite capacity constraints are embedded to prevent modeled resource overload.

Using these inputs, APS tools calculate release timing based on projected bottleneck start times, backward scheduling from due dates, or time-phased load projections. Sequencing is optimized to balance capacity and reduce lateness. When disruptions occur, the system recalculates, resequencing operations to mitigate delay.

This is a legitimate optimization function.
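Backward scheduling from a due date, one of the release calculations named above, can be illustrated in a few lines. This is a minimal sketch that assumes operations run back to back with no queue time or shift calendar. The function `latest_release`, the routing hours, and the buffer are hypothetical values chosen for illustration.

```python
from datetime import datetime, timedelta


def latest_release(due, operation_hours, buffer_hours=0.0):
    """Backward-schedule from the due date through each routing step.

    Assumes operations run back to back with no queue time, which is a
    deliberate simplification; real engines model calendars and queues.
    """
    total = sum(operation_hours) + buffer_hours
    return due - timedelta(hours=total)


due = datetime(2024, 3, 15, 16, 0)
routing_hours = [2.5, 4.0, 1.5]           # hypothetical operation times
release = latest_release(due, routing_hours, buffer_hours=4.0)
```

The result is a recommended release time, twelve hours before the due date in this case. Nothing in the calculation decides whether release is permitted.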

Release timing is computed based on modeled system state rather than intuition. Congestion is treated as a function of premature release, inefficient sequencing, or misaligned capacity assumptions. The assumption is that improved calculation improves control.

The underlying premise is that computation governs behavior.

If optimal release timing is calculated, admission will follow that calculation. If finite capacity is modeled, operational load will align with that model. If re-optimization occurs, execution will conform to the revised plan.

The next section examines whether optimizing release timing is equivalent to governing admission authority.

4.2 What TPS Governs

The Toyota Production System operates at a different architectural layer than release timing optimization. It does not begin by calculating when work should enter the system. It begins by defining the conditions under which work may enter at all.

Admission authority precedes sequencing.

Before release occurs, the system must answer prior boundary questions. What is the demonstrated and sustainable capacity of the constraint under defined normal conditions? What level of work-in-process can be supported without destabilizing flow? Under what conditions must release stop to prevent compounding instability?

These are boundary conditions, not scheduling variables.
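One common way to turn demonstrated capacity into a work-in-process boundary is Little's Law, which relates average work-in-process to throughput and lead time. The sketch below is an assumption for illustration; the paper does not prescribe this calculation, and the function `wip_cap` and its input figures are hypothetical.

```python
import math


def wip_cap(demonstrated_throughput_per_day, target_lead_time_days):
    """Little's Law: WIP = throughput x lead time, rounded down to whole jobs."""
    return math.floor(demonstrated_throughput_per_day * target_lead_time_days)


cap = wip_cap(demonstrated_throughput_per_day=8, target_lead_time_days=2.5)
```

With a demonstrated rate of 8 jobs per day and a target lead time of 2.5 days, the boundary is 20 jobs. The value is set from demonstrated performance before any schedule is computed, which is what makes it a boundary condition rather than a scheduling variable.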

Within TPS architecture, work is admitted only when defined operating conditions are satisfied. Standardized Work must be executable as designed. Work-in-process limits must remain within defined boundaries. Load must not exceed stabilized capacity. Downstream processes must be able to accept flow without accumulation.

If these conditions are violated, continuation is not permitted.

Admission functions as authorization rather than timing calculation.

Calculation asks when work should ideally move. Authorization determines whether work may move. Calculation recommends. Authorization enforces.

Enforcement alters system state. When WIP limits are reached, additional jobs are not released. When constraint capacity is exceeded, admission pauses. When abnormality disrupts defined normal, continuation stops until restoration occurs.
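The enforcement behavior described above can be sketched as an admission gate. This is a minimal illustration, not a reference implementation of Kanban or any commercial system. The class `AdmissionGate`, its fields, and the job identifiers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AdmissionGate:
    """Hypothetical gate that authorizes release against a fixed WIP cap."""
    wip_cap: int
    admitted: set = field(default_factory=set)
    waiting: list = field(default_factory=list)
    line_normal: bool = True   # set False when defined normal is violated

    def request_release(self, job_id):
        # Authorization, not timing: refuse when the cap is reached
        # or when the line is outside defined normal conditions.
        if self.line_normal and len(self.admitted) < self.wip_cap:
            self.admitted.add(job_id)
            return True
        self.waiting.append(job_id)
        return False

    def complete(self, job_id):
        # Completion frees one slot; the oldest waiting job may then enter.
        self.admitted.discard(job_id)
        if self.waiting and self.line_normal and len(self.admitted) < self.wip_cap:
            self.admitted.add(self.waiting.pop(0))


gate = AdmissionGate(wip_cap=2)
results = [gate.request_release(j) for j in ("J1", "J2", "J3")]
gate.complete("J1")
```

The third request is refused because the cap is reached, and the job enters only when a completion frees a slot. Urgency is not an input to `request_release`, which is the point of the sketch.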

In high-mix job shops, due-date pressure and routing complexity increase discretionary admission. Without enforced authorization, release expands in response to urgency. Optimization tools may resequence intelligently, but they cannot remove overload once exposure has been admitted.

TPS governs admission before sequencing. Optimization, when applied, operates within enforced limits.

The next section examines how overload propagates once admission authority is mis-sequenced.

4.3 The Structural Question

The decisive issue is not whether Advanced Planning and Scheduling systems can compute optimal release times under modeled conditions. Modern scheduling engines process large data sets, incorporate routing variability, and recalculate sequencing logic in response to disruption. That capability is established.

The critical question is who has authority when operational pressure intensifies.

High-mix environments operate under recurring volatility. Expedite requests increase. Projected completion dates extend beyond commitments. Throughput recovery becomes a management priority. Under these conditions, discretionary decisions expand.

If admission authority is not structurally capped, additional work is released in response to urgency. Priority overrides occur. Exceptions are granted. Each action appears locally rational. Collectively, exposure increases.

As total work-in-process rises, average lead time rises with it. As utilization approaches saturation, waiting time grows nonlinearly. Queue lengths extend. Variability propagates through longer and less predictable waiting times. Due-date risk escalates further, generating additional release pressure.

This is a feedback loop.

Scheduling engines respond by recalculating sequence and reprioritizing jobs. They redistribute waiting time across the admitted workload. They do not remove the workload that has already entered the system. Re-optimization shifts congestion. It does not eliminate exposure.
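The claim that re-optimization shifts waiting time without removing admitted load can be checked on a single machine. The sketch below compares first-in-first-out order with earliest-due-date order. The job data are invented for illustration, and the helper `completion_times` is hypothetical.

```python
def completion_times(jobs, order):
    """Completion time of each job on one machine for a given sequence."""
    clock, done = 0, {}
    for job_id in order:
        clock += jobs[job_id]["hours"]
        done[job_id] = clock
    return done


# Hypothetical admitted jobs: processing hours and due time (hours from now).
jobs = {
    "A": {"hours": 6, "due": 20},
    "B": {"hours": 3, "due": 5},
    "C": {"hours": 4, "due": 9},
}

fifo = completion_times(jobs, ["A", "B", "C"])
edd = completion_times(jobs, sorted(jobs, key=lambda j: jobs[j]["due"]))

total_load = sum(j["hours"] for j in jobs.values())
```

Resequencing changes which jobs wait, and in this example earliest-due-date order removes all lateness. The last job still finishes at hour 13 in both sequences, because the admitted load is 13 hours regardless of order. If admitted load exceeds what the resource can absorb before the due dates, no sequence recovers it.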

Lean TPS resolves the condition at the admission boundary.

When exposure exceeds defined limits, release stops. When abnormality disrupts defined normal, continuation stops. Leadership must restore stability before additional work is admitted. Behavior changes when limits are reached.

The structural question is binary.

Is exposure capped before optimization occurs, or is optimization relied upon to manage exposure after admission?

If exposure is capped first, optimization operates within stable limits.

If exposure is not capped first, optimization becomes a mechanism for redistributing instability.

This distinction determines how high-mix job shops behave under real volatility rather than under modeled equilibrium.

4.4 Why Calculation Does Not Equal Control

Calculation can improve efficiency, but it cannot alter structural flow relationships.

All production systems are governed by queuing dynamics. When arrival rate at a constrained resource exceeds effective processing capacity, queue length increases. As utilization approaches saturation, average waiting time grows nonlinearly. Variability in arrival or processing further amplifies delay. These relationships are structural properties of flow rather than managerial choices.
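The nonlinear growth in waiting time can be made concrete with the simplest queuing model. The sketch below assumes an M/M/1 queue (random arrivals, exponentially distributed service times, one server), which is a simplifying assumption not taken from the paper. Real shops have multiple resources and different variability, so the numbers are indicative only.

```python
def mm1_mean_wait(utilization, mean_service_time=1.0):
    """Mean time in queue for an M/M/1 system: Wq = rho / (1 - rho) * service time."""
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be in [0, 1)")
    return utilization / (1.0 - utilization) * mean_service_time


waits = {rho: mm1_mean_wait(rho) for rho in (0.50, 0.80, 0.90, 0.95)}
```

Under this model, mean waiting time is roughly 1, 4, 9, and 19 service times at 50, 80, 90, and 95 percent utilization. Moving from 90 to 95 percent adds more delay than moving from 50 to 90 percent.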

Sequencing logic does not eliminate congestion; it determines order within an existing queue. It does not remove the queue created by excess admission.

Advanced Planning and Scheduling systems improve sequencing relative to unmanaged dispatching. They incorporate routing complexity, finite capacity assumptions, and dynamic recalculation. Under stable conditions, they reduce avoidable inefficiencies and improve schedule adherence. However, they do not remove load once it has been admitted.

If work continues to enter the system in response to due-date pressure, cumulative exposure eventually exceeds sustainable capacity. Re-optimization redistributes waiting time across jobs, but it does not reduce total work-in-process. Lead times extend, variability compounds, and Quality risk increases as compensation replaces stability.
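Little's Law, a standard queuing result for systems observed over a long stable period, makes the link between work-in-process and lead time explicit. The job counts and throughput below are hypothetical and serve only as a worked illustration.

```latex
\[
\text{average lead time} \;=\; \frac{\text{average work-in-process}}{\text{average throughput}}
\]
\[
\frac{40\ \text{jobs}}{10\ \text{jobs/day}} = 4\ \text{days}
\qquad\text{versus}\qquad
\frac{60\ \text{jobs}}{10\ \text{jobs/day}} = 6\ \text{days}
\]
```

At a saturated constraint, throughput does not rise when more work is admitted. Resequencing changes neither term, so average lead time follows admitted work-in-process.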

Control therefore requires authority to limit admission before saturation occurs.

Lean TPS embeds that authority structurally. Work-in-process is capped explicitly, release operates within bounded loops, and abnormality interrupts continuation. When boundaries are reached, system state changes immediately.

APS models may incorporate work-in-process targets or projected load limits within computational logic. Digital limits can function as structural constraints only when authority to override them is separated from the optimization layer and requires formal redefinition of operating capacity. If the same decision layer that calculates release timing can also revise exposure limits in response to urgency, governance remains conditional. Structural governance requires that limits be defined independently of optimization logic and altered only through explicit capacity redefinition rather than recalculation.
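One way to picture this separation in software is sketched below. It is a minimal illustration, not a description of any APS product. `ExposureLimit`, `redefine_capacity`, and `Scheduler` are hypothetical names, and Python can only discourage, not fully prevent, a determined override.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class ExposureLimit:
    """WIP cap owned by the governance layer (hypothetical type)."""
    max_active_jobs: int
    basis: str  # reference to the capacity study that justifies the cap


def redefine_capacity(new_cap: int, basis: str) -> ExposureLimit:
    """The only path to a new cap: an explicit, documented redefinition."""
    if not basis.strip():
        raise ValueError("a capacity redefinition needs a documented basis")
    return ExposureLimit(max_active_jobs=new_cap, basis=basis)


class Scheduler:
    """Optimization layer: it may sequence work but cannot edit the cap."""

    def __init__(self, limit: ExposureLimit) -> None:
        self._limit = limit
        self.active: list[str] = []

    def release(self, job: str) -> bool:
        if len(self.active) >= self._limit.max_active_jobs:
            return False  # cap reached: the job stays outside the system
        self.active.append(job)
        return True


limit = ExposureLimit(max_active_jobs=2, basis="demonstrated throughput study")
scheduler = Scheduler(limit)
results = [scheduler.release(job) for job in ("A", "B", "C")]
```

Here the third release is refused, and assigning to `limit.max_active_jobs` raises `FrozenInstanceError`. A higher cap exists only after `redefine_capacity` is called with a stated basis.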

Governance-based limits define continuation permission. When exposure reaches its cap, admission stops. When defined normal is violated, production stops. Restart requires confirmation of restored conditions.

Optimization arranges admitted work, whereas control governs how much work may be admitted and whether it may proceed. Without control, optimization manages instability after exposure has accumulated. With control, optimization improves performance within stable limits and preserves Quality.

5. Heijunka and Load Discipline

5.1 The Mischaracterization

Heijunka is frequently described as a demand-smoothing technique suited to repetitive assembly environments with stable volume and predictable mix. In this framing, leveling is equated with evenly spaced finished goods production in response to relatively uniform consumption patterns.

Under this interpretation, Heijunka appears incompatible with high-mix, low-volume production. Engineer-to-order configurations, routing variability, sporadic demand, and due-date-driven commitments do not resemble repetitive final assembly. If demand itself is not level, leveling appears irrelevant.

This interpretation rests on a category error. It equates Heijunka with smooth demand rather than with disciplined capacity translation.

Heijunka is not primarily a demand-smoothing device. It is a load-disciplining mechanism. Its function is to regulate the rate and pattern of work admission relative to demonstrated system capacity. It governs how external demand is translated into internal workload. It does not eliminate volatility; it prevents volatility from being transmitted directly into constrained resources without structural control.

All production systems face variability. The structural issue is whether internal workload is permitted to mirror that variability without constraint, or whether admission is structured to protect stability. Heijunka defines that structure by pacing and sequencing workload relative to capacity boundaries.

When interpreted as schedule formatting for repetitive output, Heijunka appears limited to assembly contexts. When understood as a mechanism that stabilizes workload relative to capacity, its relevance extends to any system in which uncontrolled admission leads to overload.

High-mix job shops do not eliminate the need for load discipline. Routing diversity and demand irregularity increase sensitivity to queuing amplification. The absence of repetition does not eliminate capacity limits; it increases the consequences of exceeding them.

The next section examines how load discipline functions in non-repetitive environments without relying on finished-goods repetition.

5.2 Heijunka Defined Structurally

Heijunka is a load-disciplining mechanism. It does not eliminate demand variability; it regulates how that variability is translated into internal workload relative to demonstrated capacity. Its purpose is to prevent uncontrolled overload by structuring admission and sequencing in relation to sustainable throughput.

Structurally, Heijunka stabilizes four interconnected conditions. It stabilizes admission pacing so that work enters at a rate aligned with sustainable capacity rather than fluctuating in response to urgency. It stabilizes constraint loading so that bottleneck resources are not subjected to abrupt surges followed by idle gaps. It stabilizes product mix sequencing so that variety does not translate into chaotic processing patterns that increase setup loss and variability. It stabilizes work-in-process exposure so that accumulation between operations remains bounded and visible.

In repetitive environments, this stabilization appears as mixed-model sequencing at final assembly, where relative demand stability allows visible leveling at the end of the value stream. In high-mix, low-volume environments, the structural logic remains intact but the point of application shifts. Leveling occurs at the release boundary and at constrained resources rather than at finished goods output. Admission pacing becomes the primary control lever. The visible sequencing board may disappear, but the underlying capacity discipline remains.

The governing question is constant across contexts: how much load can the system absorb without destabilizing Standardized Work and degrading Quality. When admission exceeds sustainable capacity, variability compounds, lead times extend, compensation behaviors emerge, and stability erodes.

Heijunka addresses this condition by pacing release relative to constraint capacity and preventing internal workload from directly mirroring external volatility. In high-mix job shops, this may take the form of controlled release intervals, explicit caps on active jobs at bottleneck centers, predefined daily load quotas aligned with demonstrated throughput, or sequencing patterns that balance setup frequency. The mechanism differs in form from automotive assembly, but the structural purpose is identical.
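A daily load quota of this kind can be sketched in a few lines. The example assumes each job carries an estimate of its bottleneck hours and that release follows backlog order without skipping. `Job`, `release_for_day`, and the figures are hypothetical.

```python
from typing import NamedTuple


class Job(NamedTuple):
    name: str
    bottleneck_hours: float


def release_for_day(backlog: list[Job], daily_quota_hours: float) -> tuple[list[Job], list[Job]]:
    """Release jobs in backlog order until the bottleneck quota is used.

    Returns (released, still_waiting). Once one job does not fit,
    every later job also waits, so order is never bypassed.
    """
    released: list[Job] = []
    waiting: list[Job] = []
    used = 0.0
    for job in backlog:
        fits = used + job.bottleneck_hours <= daily_quota_hours
        if fits and not waiting:
            released.append(job)
            used += job.bottleneck_hours
        else:
            waiting.append(job)
    return released, waiting


backlog = [Job("A", 3.0), Job("B", 4.0), Job("C", 2.0), Job("D", 1.0)]
released, waiting = release_for_day(backlog, daily_quota_hours=8.0)
```

With an eight-hour quota, jobs A and B are released and C and D remain outside the system until the next release interval.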

Heijunka is therefore not assembly-specific. It is a capacity discipline mechanism that governs how variability enters the system.

The next section examines how this discipline applies in high-mix, low-volume job shops, where due-date pressure pushes work-in-process to expand.

5.3 High-Mix, Low-Volume Application

In high-mix job shops, variability is inherent. Orders arrive irregularly. Configurations differ. Routing paths vary. Setup requirements shift. Due dates are negotiated individually. These conditions increase volatility at the release boundary.

Volatility does not eliminate the need for load discipline. It increases it.

Leveling in this context does not mean smoothing finished goods output. It means regulating how much work is active inside the system relative to demonstrated constraint capacity.

Practically, this requires limiting concurrent active jobs at bottleneck resources. Release must reflect sustainable throughput rather than urgency. When multiple orders escalate simultaneously, admission cannot expand proportionally without destabilizing flow.

Without pacing, oscillation emerges.

Work is released in response to urgency. Multiple jobs enter concurrently. Bottlenecks saturate. Queue length increases. Waiting time extends nonlinearly. Setup frequency becomes irregular. Standardized Work is compressed or bypassed. Quality risk increases under schedule pressure.

After congestion peaks, starvation may follow as flow becomes uneven. The system alternates between overload and idle gaps. Variability compounds internally.

This oscillation is a structural consequence of unconstrained admission.

Heijunka interrupts this pattern by filtering demand through capacity boundaries. Admission occurs at a controlled rhythm aligned with sustainable throughput. When limits are reached, additional work remains outside the system rather than compounding instability inside it.
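The filtering effect can be shown with a small deterministic simulation. It is a sketch under simplifying assumptions: jobs are identical, capacity is a fixed number of jobs per day, and work-in-process is counted after admission and before processing. The function `simulate` and the arrival pattern are hypothetical.

```python
from typing import Optional


def simulate(arrivals: list[int], capacity_per_day: int,
             wip_cap: Optional[int] = None) -> tuple[list[int], list[int]]:
    """Return (internal WIP per day, external backlog per day)."""
    inside = 0   # jobs admitted and not yet finished
    outside = 0  # jobs waiting at the release boundary
    wip_history: list[int] = []
    backlog_history: list[int] = []
    for arriving in arrivals:
        outside += arriving
        if wip_cap is None:
            admitted = outside  # urgency-driven: everything enters
        else:
            admitted = min(outside, max(0, wip_cap - inside))
        outside -= admitted
        inside += admitted
        wip_history.append(inside)
        backlog_history.append(outside)
        inside -= min(inside, capacity_per_day)  # the day's processing
    return wip_history, backlog_history


arrivals = [2, 12, 1, 0, 9, 0]  # bursty demand, jobs per day
uncapped_wip, _ = simulate(arrivals, capacity_per_day=4)
capped_wip, capped_backlog = simulate(arrivals, capacity_per_day=4, wip_cap=6)
```

Without a cap, internal work-in-process peaks at 12 jobs. With a cap of 6, it never exceeds 6, and the excess appears as a visible backlog outside the system.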

Volatility remains visible. It does not propagate unchecked.

In high-mix environments, Heijunka is not schedule formatting. It is admission discipline. It prevents internal workload from directly mirroring external urgency.

The next section examines how explicit work-in-process boundaries reinforce this discipline at the exposure level.

6. Kanban vs Controlled Push

6.1 Kanban’s Structural Role

Kanban is often described as an inventory management tool. In this narrow framing, it appears to be a visual signaling device used to trigger replenishment. This description understates its architectural role.

Within Lean TPS, Kanban limits exposure.

A Kanban loop defines the maximum work-in-process permitted between two linked processes. It ties production authorization directly to downstream consumption. It establishes a visible and finite boundary between upstream and downstream operations.

When the authorized number of Kanban signals is deployed, no additional production is permitted. Upstream processes stop regardless of local idle capacity or due-date pressure. This is not advisory scheduling guidance. It is enforced admission control.

Under load, the distinction is decisive.

Kanban does not forecast demand or compute optimal plant-wide sequencing. It does not model due-date commitments across complex routing structures. Its function is narrower and more fundamental. It prevents overproduction and uncontrolled accumulation of work between processes.

Overproduction includes producing ahead of need in a way that increases internal exposure beyond sustainable limits. When upstream output continues without downstream authorization, instability accumulates. Inventory masks imbalance. Lead times extend. Variability propagates.

Kanban interrupts this pattern by capping exposure at defined boundaries.
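The authorization logic of a single loop can be expressed as a fixed pool of cards. This is an illustrative sketch only. `KanbanLoop` and its method names are hypothetical, and a real loop would also track part numbers and container quantities.

```python
class KanbanLoop:
    """Fixed number of cards between one upstream and one downstream process."""

    def __init__(self, cards: int) -> None:
        if cards < 1:
            raise ValueError("a loop needs at least one card")
        self.cards = cards
        self.in_use = 0

    def authorize(self) -> bool:
        """Upstream requests permission to produce one container."""
        if self.in_use >= self.cards:
            return False  # all cards deployed: upstream stops
        self.in_use += 1
        return True

    def consume(self) -> None:
        """Downstream consumes one container and frees its card."""
        if self.in_use == 0:
            raise RuntimeError("no container available to consume")
        self.in_use -= 1


loop = KanbanLoop(cards=2)
first, second, third = loop.authorize(), loop.authorize(), loop.authorize()
loop.consume()
after_consumption = loop.authorize()
```

The third request is refused regardless of upstream idle capacity. Production is authorized again only after downstream consumption returns a card.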

In high-mix environments, the mechanism may not take the form of physical cards at every interface. It may appear as explicit WIP caps, digital authorization signals, constrained job slots at bottleneck resources, or visible release quotas. The physical implementation varies. The architectural function does not.

Kanban defines how much work may exist within a boundary and prohibits expansion beyond that limit without deliberate structural change.

It is therefore not primarily about inventory accounting. It is about preventing exposure from exceeding capacity before congestion forms.

The next section examines how controlled push models address release and where the architectural difference emerges.

6.2 Controlled Push in Advanced Planning and Scheduling Models

Controlled push models pursue outcomes similar to Kanban-based control through computational release timing rather than signal-based admission caps. Jobs are released when algorithms determine that projected load, routing availability, and due-date requirements permit entry without excessive congestion. Work-in-process is regulated through calculated timing rather than through fixed authorization limits.

This approach is presented as more precise. Advanced Planning and Scheduling systems evaluate routing complexity, machine status, setup times, and delivery commitments simultaneously. Release timing is recalculated dynamically as conditions change. Instead of relying on a predefined number of authorization signals, the model adjusts admission continuously.

Under stable conditions, this can function effectively. When variability is moderate and modeled constraints are respected operationally, algorithmic release timing can moderate congestion and improve due-date performance relative to uncontrolled push systems.

The architectural question concerns enforcement.

What prevents admission when algorithmic recommendations conflict with delivery pressure?

In high-mix job shops, due-date escalation is routine. When an order becomes critical, pressure to release increases. If the model recommends delay due to projected overload, management faces a decision. If the constraint is recalculated to accommodate the expedite, the boundary shifts. If this occurs repeatedly, recalculation becomes the mechanism of expansion.

If WIP limits exist only as modeled parameters, they remain subject to override. Each exception appears justified. Cumulatively, exposure increases. Queue length expands. Lead time extends.

Controlled push regulates flow through computation. Kanban regulates flow through enforced authorization.

The difference is not algorithm quality. It is authority location. Adaptive recalculation becomes governance only if modeled constraints are not modifiable by release pressure. When recalculation modifies sequence within fixed exposure boundaries, stability is preserved. When recalculation modifies the boundaries themselves, exposure expands under urgency. The distinction is whether optimization operates inside predefined limits or participates in redefining those limits during escalation.

In a Kanban-based architecture, exceeding authorized limits requires structural change. In a controlled push architecture, exceeding projected limits may occur through recalculation or discretionary override. The model adjusts; the admitted load remains.

When systems operate near capacity and variability increases, this distinction determines whether instability is prevented at the boundary or managed after accumulation.

6.3 The Structural Difference

The difference between Kanban-based exposure control and controlled push release logic is not technical sophistication. It is authority location.

Kanban establishes a visible and finite limit on work-in-process. Whether implemented through physical cards, digital tokens, container limits, or slot-based controls, the maximum authorized exposure is explicit. When that limit is reached, upstream production stops. Exceeding the limit is not an optimization choice. It is a rule violation.

Because the boundary is visible and finite, exposure reaching its cap produces an immediate operational signal. The limit exists in the operating system, not only in a computational model. Breaking it requires deliberate and observable action.

Controlled push depends on adherence to calculated release logic. Algorithms determine when work should enter based on projected load, routing complexity, and due dates. Exposure remains bounded only if model assumptions and recommendations are followed operationally.

Under pressure, model revision becomes the mechanism of boundary expansion.

When due-date risk escalates, priorities are adjusted. Capacity assumptions may be revised. Release timing is recalculated. What was previously considered overload may be redefined as acceptable under urgent conditions. The boundary shifts with each recalculation.

In this structure, authority remains conditional. The algorithm recommends. Management retains discretion.

In a governance-based Kanban architecture, authority is embedded. Admission does not expand automatically under urgency. Raising the admission boundary requires structural action such as additional shifts, investment, or explicit revision of WIP limits. The boundary does not drift implicitly.

The distinction is binary.

Kanban embeds exposure limits in the operating system.

Controlled push embeds limits in computational logic subject to override.

When authority is conditional, exposure expands incrementally under pressure.

When authority is embedded, overload is prevented at the boundary.

This difference determines whether instability is prevented structurally or redistributed computationally after admission.

The next section examines the physical consequences of exposure expansion through queuing behavior in constrained machining environments.

7. Scheduling Cannot Repeal Physics

7.1 Queuing Fundamentals

All production systems are governed by queuing dynamics. These relationships apply regardless of product mix, routing diversity, or scheduling sophistication. They are structural properties of flow.

When arrival rate at a constrained resource approaches effective processing capacity, queue length increases. As utilization nears saturation, average waiting time rises nonlinearly. When variability exists in arrival or processing, delay amplification intensifies. Small load increases near capacity produce disproportionate growth in waiting time.
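For a single constrained resource, these relationships are commonly summarized by Kingman's approximation for the mean wait in queue. The numbers in the worked case are hypothetical.

```latex
\[
W_q \;\approx\; \frac{\rho}{1-\rho}\cdot\frac{c_a^{2}+c_s^{2}}{2}\cdot\tau
\]
% \rho : utilization of the resource
% c_a, c_s : coefficients of variation of interarrival and processing times
% \tau : mean processing time
\[
\tau = 1\ \text{h},\quad c_a^{2}+c_s^{2}=2:\qquad
\rho = 0.80 \Rightarrow W_q \approx 4\ \text{h},\qquad
\rho = 0.95 \Rightarrow W_q \approx 19\ \text{h}
\]
```

Doubling the variability term doubles both waits. Utilization and variability multiply, which is why small load increases near capacity have disproportionate effects.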

This behavior is observable without formal modeling. When admission to a bottleneck machining center exceeds sustainable throughput, pallets accumulate. When multiple large components enter heat treat simultaneously, waiting becomes unpredictable. When grinding capacity saturates, staged jobs expand even if upstream flow appears balanced.

Finite scheduling improves order within these constraints. It can sequence execution more intelligently, group setups, and reduce avoidable idle gaps. It can prioritize urgent work more effectively than manual dispatching.

It cannot alter the relationship between arrival rate and capacity.

If admitted workload exceeds what the constraint can process within the available horizon, waiting accumulates. Sequencing redistributes that waiting among jobs. It does not eliminate it.

This boundary is determined by effective capacity and variability, not computational sophistication.

The following section examines how resequencing reallocates congestion rather than removing structural overload.

7.2 Limits of Resequencing

Dynamic resequencing is presented as a corrective mechanism within Advanced Planning and Scheduling systems. When disruptions occur or priorities shift, processing order is recalculated and jobs are reprioritized to reduce lateness and protect critical commitments.

Resequencing can improve local performance by reducing tardiness for selected jobs, grouping similar setups, and avoiding idle time when alternative routings exist. These gains occur within the work already admitted to the system.

Resequencing does not reduce total admitted load.

When workload at a constrained resource exceeds effective capacity, a queue forms representing accumulated exposure. Resequencing determines execution order within that queue, but it does not reduce its volume.

Expediting makes this mechanism explicit. When a critical job is advanced, its waiting time decreases while the waiting time of displaced jobs increases correspondingly. The total work queued at the constraint remains unchanged; delay is redistributed rather than eliminated.
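A four-job queue on one machine shows the redistribution. The sketch assumes equal processing times of four hours, which keeps the arithmetic exact. With unequal times the sum of waits can shift with sequence, but the hours of admitted work do not. The names are hypothetical.

```python
def waiting_times(sequence: list[tuple[str, float]]) -> dict[str, float]:
    """Hours each job waits before starting when processed in the given order."""
    waits: dict[str, float] = {}
    clock = 0.0
    for name, hours in sequence:
        waits[name] = clock
        clock += hours
    return waits


queue = [("A", 4.0), ("B", 4.0), ("C", 4.0), ("D", 4.0)]
expedited = [queue[3]] + queue[:3]  # job D is moved to the front

before = waiting_times(queue)      # A waits 0 h, D waits 12 h
after = waiting_times(expedited)   # D waits 0 h, A, B, C each wait 4 h longer
total_before = sum(before.values())
total_after = sum(after.values())
```

Job D gains 12 hours. Jobs A, B, and C lose 4 hours each. Total waiting is 24 hours in both sequences, and the queue still takes 16 hours to clear.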

Optimization therefore improves order within congestion but does not remove congestion created by excess admission.

If additional work continues to enter an already overloaded constraint, resequencing manages delay distribution without restoring structural stability. Lead times remain extended, variability persists, and frequent priority shifts erode operational stability.

In high-mix environments with intersecting routings, resequencing at one resource can create imbalance at another. Each recalculation resolves a local conflict while potentially generating new instability elsewhere. The system remains reactive to accumulated load.

This limitation is structural. Congestion originates from excess admitted workload relative to effective capacity. Once admitted, load can be reduced only by lowering arrival rate or increasing sustainable capacity. Sequence rearrangement alone cannot restore stability or protect Quality.

The following section examines how governance-based admission control prevents congestion at the boundary rather than managing it after formation.

7.3 Governance-Based Prevention

Lean TPS addresses congestion at its source. It constrains arrival rate before instability compounds.

Prevention is achieved through enforced exposure limits.

Admission pacing is defined relative to demonstrated constraint capacity. Work does not enter continuously in response to urgency. Release follows a controlled rhythm aligned with sustainable throughput. Variability is filtered at the boundary rather than transmitted directly to constrained resources.

Explicit work-in-process caps define maximum allowable exposure. These limits are visible and finite. When the cap is reached, additional release stops. Excess demand remains outside the system rather than accumulating invisibly inside it.

Stop authority is embedded. When conditions deviate from defined normal, continuation is interrupted. The system does not rely on adaptation to absorb overload. Deviation changes operational state immediately.

Leadership obligation is structural. When limits are reached or abnormality occurs, response is required before flow resumes. Responsibility remains within daily management until stability is restored.

These mechanisms prevent overload rather than manage it after admission.
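The stop and restart obligations can be sketched as a two-state machine. This is a simplified illustration. `GovernedProcess` and its methods are hypothetical, and the confirmation step stands in for the leadership response described above.

```python
from enum import Enum


class State(Enum):
    RUNNING = "running"
    STOPPED = "stopped"


class GovernedProcess:
    """Continuation is permitted only while no abnormality is open."""

    def __init__(self) -> None:
        self.state = State.RUNNING
        self.open_abnormalities: set[str] = set()

    def report_abnormality(self, description: str) -> None:
        """Any deviation from defined normal stops continuation at once."""
        self.open_abnormalities.add(description)
        self.state = State.STOPPED

    def confirm_restored(self, description: str) -> None:
        """Leadership confirms that one abnormality has been corrected."""
        self.open_abnormalities.discard(description)

    def restart(self) -> bool:
        """Flow resumes only when every open abnormality is confirmed restored."""
        if self.open_abnormalities:
            return False
        self.state = State.RUNNING
        return True


process = GovernedProcess()
process.report_abnormality("torque out of range")
early_restart = process.restart()   # refused: condition not yet restored
process.confirm_restored("torque out of range")
later_restart = process.restart()   # permitted
```

Detection alone does not return the process to `RUNNING`. The restart request is refused until restoration has been confirmed.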

Optimization remains valuable within these boundaries. Sequencing improvements, setup reduction, routing refinement, and capacity enhancement operate more effectively when exposure is controlled. Optimization enhances stability. It does not substitute for enforcement.

The decisive question is whether architecture prevents overload before it compounds, or attempts to calculate around overload after it has been admitted.

When exposure is constrained at the boundary, volatility is surfaced early and Quality is protected. When exposure expands and recalculation manages congestion, instability propagates and Quality degrades under pressure.

The next section compares the two approaches as system stacks and examines how the ordering of their layers determines behavior under sustained volatility.

8. Architectural Stack Comparison

8.1 Two Different System Stacks

The disagreement between optimization-first job shop models and governance-first TPS architecture is not about individual tools. It concerns system layering. The sequence in which authority is established determines how the system behaves under pressure.

Optimization-First APS-Centric Stack

A typical optimization-first architecture follows this order:

• Forecast or Order Intake

• Due-Date Assignment

• APS Release Calculation

• Finite Capacity Scheduling

• Dynamic Resequencing

• Expediting Logic

• Post-Event Problem Solving

In this stack, admission is determined through modeled capacity, due dates, and projected system state. Release timing is algorithmic. Exposure is monitored through dashboards and load indicators. When congestion develops, resequencing adjusts execution order. When commitments are threatened, expediting reallocates priority. Persistent performance gaps trigger post-event analysis and corrective action.

Stability in this architecture is restored reactively. Overload is not structurally prevented at the boundary; it is managed after work has already been admitted. The effectiveness of this approach depends on whether modeled constraints remain enforceable boundaries or become adjustable parameters under pressure.

Authority resides primarily in computational logic supplemented by managerial override. Modeled constraints can be revised, WIP targets recalculated, and priority rules adjusted. Under escalation, recalculation shifts boundaries. The architecture assumes that sufficiently strong computation can regulate behavior dynamically.

Governance-First TPS Stack

The governance-first architecture reverses the sequence:

• Defined Standardized Work

• Demonstrated Capacity Boundaries

• Explicit WIP Caps

• Admission Authority Rules

• Signal-Based Release

• Stop Logic for Abnormality

• Leadership Response Obligation

• Problem Solving After Stabilization

In this stack, work is defined before it is scheduled. Standardized Work establishes the sequence, timing, and in-process conditions required to produce Quality consistently. Demonstrated capacity boundaries define sustainable throughput under normal operation and serve as verified limits rather than theoretical maxima.

Explicit WIP caps limit exposure structurally. Admission authority rules define who may release work and under what conditions. Release is not merely a calculated recommendation; it is an authorization event governed by enforceable limits.

Signal-based mechanisms align upstream production with downstream capacity availability. Stop logic interrupts continuation when defined normal is violated. Leadership response is required before flow resumes. Problem solving occurs after stabilization rather than during uncontrolled execution.

In this architecture, admission is constrained before scheduling. Exposure is capped structurally, and continuation depends on compliance with defined conditions. Optimization operates within enforced limits rather than defining them.
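The ordering of the two layers can be reduced to a short function. It is a sketch under simple assumptions: jobs are `(name, due day)` pairs, the cap counts jobs, and earliest due date stands in for whatever optimizer is actually used. All names are hypothetical.

```python
from typing import Callable

Job = tuple[str, int]  # (job name, due day)


def earliest_due_date(jobs: list[Job]) -> list[Job]:
    """Stand-in optimizer: order admitted work by due day."""
    return sorted(jobs, key=lambda job: job[1])


def governed_schedule(requests: list[Job], active: list[Job], wip_cap: int,
                      sequence: Callable[[list[Job]], list[Job]]) -> tuple[list[Job], list[Job]]:
    """Admit up to the cap first, then let the optimizer order admitted work."""
    room = max(0, wip_cap - len(active))
    admitted, held = requests[:room], requests[room:]
    return sequence(active + admitted), held


plan, held = governed_schedule(
    requests=[("J2", 3), ("J3", 1), ("J4", 2)],
    active=[("J1", 5)],
    wip_cap=3,
    sequence=earliest_due_date,
)
```

Job J4 is held outside although its due day is early. The optimizer sequences only what the cap has admitted and has no path to enlarge the cap.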

8.2 The Decisive Structural Difference

The decisive difference between the two stacks lies in how stability is assumed to be achieved.

The APS-centric stack assumes that sufficiently strong computation can regulate system behavior dynamically. Variability is modeled, release timing is calculated, and sequencing is optimized. When disruption occurs, the system recalculates. Stability is treated as an outcome derived from continuous analytical adjustment.

The TPS stack assumes a different boundary condition. It holds that if admission is not structurally constrained, instability will emerge regardless of computational sophistication. Variability cannot be neutralized through sequencing alone. If workload exceeds sustainable capacity, queues grow. If work-in-process is uncapped, exposure expands. If continuation authority is discretionary, deviation propagates under pressure.

In this architecture, structural constraint functions as the primary stabilizer. Governance precedes calculation, and analytical intelligence operates within enforced limits defined independently of the optimization layer. Those limits cannot be altered by recalculation or priority revision, and they cannot be exceeded without explicit violation of defined conditions. Any change to exposure boundaries requires explicit redefinition of sustainable capacity rather than adjustment of scheduling parameters.

The distinction therefore concerns the locus of control. One model manages variability after it enters the system, whereas the other prevents variability from compounding at the boundary. Both architectures may employ scheduling tools and capacity models, but they differ in which layer governs exposure.

In the optimization-first stack, scheduling logic determines admission and sequencing. In the governance-first stack, structural limits define the conditions within which scheduling may occur. This ordering determines system behavior under sustained volatility and establishes whether stability and Quality are protected structurally or managed reactively.

9. Material Handling as Structural Evidence

9.1 Why Toyota Avoided Centralized Scheduling Dependence

In large-scale machining and fabrication environments, including high-mix component production, Toyota did not construct production control around centralized computational release authority. System stability did not depend on a single scheduling engine regulating congestion across the plant. Upstream planning functions existed, but execution control at the production boundary was governed through structural exposure limits rather than centralized computational authority.

Control was embedded in physical flow design. Material movement was structured through decentralized pull loops that defined explicit upstream and downstream boundaries. Production authorization was triggered by actual consumption rather than projected load. Lot sizes were deliberately constrained to prevent accumulation, and where feasible, processes were arranged in flow-oriented configurations to shorten feedback loops and expose instability rapidly.

Exposure limits were physical rather than abstract. Floor space allocation, container counts, rack quantities, lane design, and material staging areas were configured to define maximum allowable work-in-process. When those limits were reached, upstream production stopped. The boundary was visible and finite. Exceeding it required explicit intervention rather than recalculation.

Material movement cadence reinforced this structure. Tugger routes and automated guided vehicle systems operated on defined intervals tied to consumption signals and replenishment cycles. Movement frequency was disciplined and observable. Deviation from cadence signaled abnormality rather than triggering immediate computational reprioritization.

Material handling was therefore designed to enforce bounded exposure and immediate visibility of overload. It was not constructed primarily to maximize routing flexibility or algorithmic sequencing efficiency. Its function was to prevent silent accumulation behind optimized schedules.

This design constitutes structural evidence of governance-first architecture. If centralized Advanced Planning and Scheduling release logic were sufficient to prevent instability, decentralized signal-based flow control would be unnecessary. A scheduling engine could regulate admission computationally across the plant. Toyota did not adopt that dependency.

Instead, constraint was embedded into physical design so that exposure could not expand invisibly through recalculation. This reflects the governing assumption of the TPS stack: structural limits precede optimization.

9.2 High-Mix Machining Environments

High-mix machining environments introduce routing variability, setup diversity, and demand fluctuation. Active part numbers may run into the hundreds or thousands, operation times differ significantly, and engineer-to-order work may share equipment with repeat production. These conditions differ materially from repetitive final assembly.

Toyota did not respond to this complexity by centralizing authority in computational release logic. Instead, it simplified structure where possible and constrained exposure where simplification was not feasible.

Machine groupings were rationalized so that routing flexibility was not left unrestricted across dispersed equipment. Similar operations were clustered to reduce travel distance, shorten feedback loops, and expose congestion earlier in the process. Batch sizes were reduced systematically, and large lots that masked instability were challenged. Smaller lots shortened lead times, reduced queue length per job, and surfaced variability more quickly. Lot reduction therefore functioned as an exposure control mechanism rather than solely as an efficiency improvement.

Changeover time was deliberately reduced to increase effective capacity and make smaller lot sizes economically sustainable. This diminished the structural incentive to accumulate large queues ahead of constrained machines.
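The interaction between lot size, changeover time, and constraint load can be checked with simple arithmetic. The sketch assumes one machine, one changeover per lot, and hypothetical weekly figures. It is not drawn from Toyota data.

```python
def utilization(demand_parts: float, run_minutes_per_part: float,
                setup_minutes: float, lot_size: float,
                available_minutes: float) -> float:
    """Fraction of available time consumed by run time plus changeovers."""
    setups = demand_parts / lot_size
    load = demand_parts * run_minutes_per_part + setups * setup_minutes
    return load / available_minutes


# Hypothetical week: 400 parts, 4 minutes each, 2400 minutes available.
large_lots = utilization(400, 4, setup_minutes=120, lot_size=100, available_minutes=2400)
small_lots_slow_setup = utilization(400, 4, setup_minutes=120, lot_size=25, available_minutes=2400)
small_lots_fast_setup = utilization(400, 4, setup_minutes=20, lot_size=25, available_minutes=2400)
```

Under these figures, lots of 100 load the machine to about 87 percent. Cutting lots to 25 without changeover reduction overloads it at about 147 percent. Cutting changeover from 120 to 20 minutes brings the small-lot case to 80 percent.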

Work-in-process was visibly capped through physical lanes, container limits, and defined staging boundaries that established maximum allowable accumulation. When those limits were reached, upstream release stopped. The boundary was structural rather than advisory.

The objective in these environments was not to eliminate complexity, which is inherent in high-mix production, but to prevent complexity from concealing overload. When routing networks are complex and demand fluctuates, uncontrolled admission produces distributed accumulation across multiple nodes. Jobs wait in parallel locations, priority conflicts multiply, expediting becomes systemic, and instability propagates through the network.

Structural caps interrupt this diffusion by constraining admission at defined boundaries. Because high variety increases sensitivity to queuing amplification, it increases rather than reduces the necessity of disciplined admission control and explicit work-in-process limits. Governance-first architecture therefore tightens structural boundaries under complexity instead of relaxing them in favor of dynamic recalculation.

10. Automation and Structural Amplification

10.1 Automation Amplifies Structural Weakness

As automation levels increase, computational capability and execution speed expand. Real-time scheduling becomes feasible, routing decisions can be recalculated continuously, and autonomous systems can reassign tasks and adjust sequences without direct human intervention.

Throughput potential increases accordingly, but so does the rate at which instability can propagate. Although automation can reduce localized variability and improve execution precision, it cannot eliminate the consequences of unconstrained admission at the system level. When execution speed rises while admission authority remains structurally unconstrained, overload accumulates more rapidly. When routing adapts dynamically without exposure caps, congestion spreads more efficiently across interconnected resources. When abnormal conditions are detected but continuation authority is discretionary, automated systems replicate deviation at scale.

Automation therefore does not remove structural limits; it intensifies the effects of violating them.

A highly automated cell operating under misaligned conditions produces deviation at higher velocity than a manual system. Similarly, a real-time scheduling engine that redistributes excess load may reduce localized idle time while increasing total system exposure. Variability is not eliminated under these conditions; it is propagated more efficiently.

Within governance-first architecture, automation operates inside enforced boundaries. Admission pacing remains defined, work-in-process caps remain visible, stop logic remains non-negotiable, and leadership response remains structurally required. Under these conditions, increased computational capability enhances optimization within stable limits.

In optimization-first architecture without structural caps, automation accelerates instability. Higher-speed processing magnifies the effects of unconstrained admission, and continuous recalculation becomes a mechanism for adaptive overload management rather than structural prevention.

As automation increases within mixed-model manufacturing environments, the importance of placing governance authority ahead of optimization intensifies. Enhanced capability amplifies structural weakness when governance is not enforced, whereas it strengthens performance when structural limits are embedded.


10.2 Why Stop Logic Becomes More Critical

As automation increases, execution speed rises and distributed autonomy expands. Detection windows shorten and the rate of error propagation accelerates. Under these conditions, stop logic becomes more critical rather than less.

Three governance conditions must therefore be structurally enforced.

First, detection must trigger interruption. Signal recognition without a change in system state does not protect Quality. When process parameters, sequencing, or material conditions deviate from defined normal, continuation authority must change immediately.

Second, interruption must halt continuation. Production cannot proceed while abnormality is analyzed in parallel, because execution under deviation allows instability to scale.

Third, restart must require verification. Resumption cannot be automatic; confirmation that defined normal has been restored must precede continuation.

These requirements are governance conditions rather than optimization features.
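The three conditions above can be sketched as a small state machine. Everything in the sketch is hypothetical: the class `StopGovernedCell`, its method names, and the example abnormality are illustrative assumptions, not a description of any real controller or APS interface. The sketch shows how detection changes state, how a stopped state blocks processing, and how restart is refused until each open abnormality is verified as restored.

```python
from enum import Enum


class State(Enum):
    RUNNING = "running"
    STOPPED = "stopped"


class StopGovernedCell:
    """Hypothetical cell whose continuation permission depends on its state."""

    def __init__(self) -> None:
        self.state = State.RUNNING
        self.open_abnormalities = set()

    def detect(self, abnormality: str) -> None:
        # Condition 1: detection changes system state, not just a display.
        self.open_abnormalities.add(abnormality)
        self.state = State.STOPPED

    def process(self, job_id: str) -> str:
        # Condition 2: no continuation while the cell is stopped.
        if self.state is not State.RUNNING:
            raise RuntimeError(f"cell stopped; {job_id} may not proceed")
        return f"{job_id} processed"

    def restart(self, verified_normal: set) -> bool:
        # Condition 3: restart only when every open abnormality is verified restored.
        if not self.open_abnormalities <= verified_normal:
            return False
        self.open_abnormalities.clear()
        self.state = State.RUNNING
        return True


cell = StopGovernedCell()
cell.detect("torque out of range")
try:
    cell.process("J-1")
    blocked = False
except RuntimeError:
    blocked = True                                    # processing is refused while stopped
premature = cell.restart(set())                       # refused: nothing has been verified
restarted = cell.restart({"torque out of range"})     # accepted after verification
outcome = cell.process("J-1")
```

The design choice worth noting is that `process` has no override argument. A scheduler calling this cell can resequence jobs, but it cannot obtain continuation while the state is stopped.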

Advanced Planning and Scheduling systems can reroute jobs, resequence operations, and redistribute load dynamically. They do not inherently enforce non-negotiable stop authority unless the surrounding architecture embeds it.

Without enforced stop logic, automated systems adapt around deviation. Routing engines may bypass constrained resources without resolving root cause. Robotic cells may continue processing within drifting tolerance bands. Scheduling engines may recalculate completion dates without correcting underlying instability. Adaptive optimization can mask abnormality, but it does not eliminate it.

As autonomy increases, the cost of weak governance rises with it. Higher execution speed multiplies the impact of deviation, distributed intelligence diffuses responsibility, and computational adjustment can obscure structural failure.

Governance-first architecture prevents this drift by embedding stop authority at the execution boundary. Detection alters continuation permission, interruption is mandatory, restart requires verification, and leadership response remains structurally obligated. In highly automated environments, stop logic becomes a primary safeguard for Quality, because computational sophistication cannot substitute for enforced authority. Increased intelligence instead heightens the necessity that structural governance precede optimization.

11. Final Structural Boundary

11.1 What This Paper Does Not Claim

This paper does not argue that Advanced Planning and Scheduling tools lack value. Modern computational scheduling materially improves sequencing across complex routings, models finite capacity interactions, and enhances due-date performance within admitted work.

It does not assert that high-mix, low-volume environments should mechanically imitate repetitive assembly lines. Engineer-to-order machining, aerospace component production, mold programs, and specialized fabrication require contextual design aligned to their operational realities.

It does not dismiss analytical modeling, finite capacity logic, or dynamic resequencing. These capabilities improve system performance when applied within defined structural limits.

It does not advocate replication of Toyota’s specific physical layouts, cell geometries, or material handling configurations. Architectural principles do not require identical physical form.

The claim advanced in this paper is narrower and structural. It concerns sequencing of authority rather than superiority of tools. The central issue is whether governance precedes optimization, and whether admission, exposure, and continuation are structurally constrained before computational intelligence is applied. Tool capability is not the determining factor; architectural ordering is.

11.2 The Boundary

The boundary established in this paper is structural rather than methodological.

Optimization does not replace governance. Analytical calculation can improve sequencing and efficiency, but it does not define the conditions under which work is permitted to proceed. Time-based release calculation is not equivalent to admission authority; computing an optimal start time does not structurally limit how much work may enter the system under load.

Resequencing does not replace exposure caps. Rearranging priority within a queue does not eliminate congestion when admitted workload exceeds effective capacity. Similarly, expediting logic does not replace stop logic; accelerating selected orders does not enforce non-negotiable interruption when abnormal conditions appear.

If admission is unconstrained, scheduling sophistication determines only how instability is distributed. Congestion may be prioritized and due dates may be triaged, but variability will continue to compound as exposure accumulates.

If admission is structurally constrained, optimization improves performance without destabilizing the system. Sequencing enhances flow within defined limits, capacity gains are realized without silent overload, and variability is surfaced rather than absorbed.

Governance therefore defines continuation permission, while optimization defines sequencing within admitted work. When governance precedes optimization, stability and Quality are protected structurally. When optimization precedes governance, stability depends on continuous recalculation under pressure.

This ordering defines the architectural boundary.

11.3 The Core Question for APS Advocates

The decisive question can be stated precisely:

At what architectural layer is override authority located, and can release occur without satisfying independently defined exposure boundaries?

Every production system encounters escalation. Customer urgency increases, revenue risk becomes visible, and leadership demands schedule recovery. Under those conditions, theoretical discipline is tested. The relevant issue is not whether release timing can be computed accurately, but whether structural limits remain fixed when pressure rises.

If exposure limits are defined within the same optimization layer that calculates release timing, then those limits can be revised through parameter adjustment, priority recalibration, or model recalculation. In this structure, governance is conditional. Stability depends on analytical discipline and managerial restraint.

If exposure limits are defined independently of the optimization layer and cannot be altered without formal redefinition of sustainable capacity, governance is structural. Release cannot occur unless boundary conditions are satisfied. Changing those boundaries requires explicit capacity change rather than recalculation.

This distinction determines behavior under volatility.

In governance-first architecture, exposure has a defined maximum that exists independently of sequencing logic. Admission authority is separated from optimization. Stop logic alters system state when conditions are violated. Leadership response is required before continuation resumes. Urgency does not modify boundaries; it activates enforcement.

In optimization-first architecture, release logic, work-in-process targets, and priority rules can be recalculated within the same decision layer that sequences work. Constraints adapt as pressure intensifies. Stability depends on continuous analytical adjustment rather than fixed structural limits.
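The separation between exposure authority and optimization logic can be sketched in a few lines. The names `ExposureBoundary`, `AdmissionGate`, and `sequence`, along with the cap of two jobs and the due dates, are hypothetical and chosen only for illustration. The sketch assumes the boundary is an immutable object owned outside the scheduler, that the scheduler may only request release, and that moving the cap requires a separate, explicit capacity redefinition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExposureBoundary:
    """Maximum admitted jobs, owned outside the optimization layer (hypothetical)."""
    max_wip: int
    capacity_basis: str  # reference to the capacity definition that justifies the cap


class AdmissionGate:
    """Holds admission authority; the scheduler can only ask, not alter."""

    def __init__(self, boundary: ExposureBoundary) -> None:
        self._boundary = boundary
        self._admitted = []

    def request_release(self, job_id: str, urgent: bool = False) -> bool:
        # Urgency is accepted as input but deliberately does not alter the boundary.
        if len(self._admitted) >= self._boundary.max_wip:
            return False
        self._admitted.append(job_id)
        return True

    def redefine_capacity(self, new_boundary: ExposureBoundary) -> None:
        # The only way to move the cap: an explicit capacity redefinition.
        self._boundary = new_boundary


def sequence(jobs: dict) -> list:
    """Stand-in for the optimization layer: orders jobs by due date only."""
    return sorted(jobs, key=jobs.get)


boundary = ExposureBoundary(max_wip=2, capacity_basis="demonstrated rate (illustrative)")
gate = AdmissionGate(boundary)
due_dates = {"J-3": 5, "J-1": 2, "J-2": 9}
decisions = {job: gate.request_release(job, urgent=True) for job in sequence(due_dates)}

try:
    boundary.max_wip = 99        # an optimizer-side "parameter adjustment"
    cap_was_edited = True
except AttributeError:           # frozen dataclasses raise FrozenInstanceError, a subclass
    cap_was_edited = False
```

Here the sequencing function decides the order in which jobs ask for admission, and the gate decides whether they enter. Marking every request as urgent changes nothing: the third job is refused. A frozen dataclass is only a language-level illustration of the idea; in practice the separation would have to be enforced organizationally and in system permissions, not by a Python attribute.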

The Toyota Production System rests on a specific architectural assumption: analytical capability does not substitute for independently defined and enforced boundaries. Without separation between optimization logic and exposure authority, overload is managed rather than prevented. That separation defines the structural divide between governance and optimization.

Continuity With the Earlier Articles in This Series

This article extends a consistent line of inquiry developed across LeanTPS.ca: how governance was progressively separated from system behavior as the Toyota Production System was translated into portable improvement frameworks.

Earlier articles examine this structural separation at different layers of the enterprise.

Six Sigma (post-1990s) and Lean Six Sigma (post-2010s): How Quality Governance Was Replaced

Examines how certification systems, project structures, and belt hierarchies displaced leadership ownership of Quality, producing technically capable organizations without durable control.

https://leantps.ca/six-sigma-lean-six-sigma-quality-governance/

Kaizen (post-1980s): How Governance Was Removed from the Toyota Production System

Traces how Kaizen became portable by shedding Jishuken, escalation, and leadership obligation, allowing improvement activity to persist while system governance eroded.

https://leantps.ca/kaizen-post-1980s-how-governance-was-removed-from-the-toyota-production-system/

Jishuken: Leadership Governance Through Direct System Engagement

Examines how Toyota preserved Quality by obligating leaders to participate directly in system diagnosis and escalation, and why the absence of Jishuken disconnects improvement from responsibility.

https://leantps.ca/jishuken/

Why Dashboards and Scorecards Cannot Replace Andon in Lean TPS

Addresses governance failure at the operational layer. When visibility tools replace stop authority and leadership obligation, Quality becomes informational rather than governed.

https://leantps.ca/dashboards-scorecards-cannot-replace-andon-lean-tps/

Lean TPS Disruptive SWOT: Governing Strategic Direction Through Quality and Leadership Obligation

Examines governance at the strategic layer. When direction is not bound to explicit conditions, ownership, cadence, and response, organizations substitute persuasion for leadership obligation.

https://leantps.ca/lean-tps-disruptive-swot/

Together, these articles describe a single structural failure mode expressed at different levels of the enterprise. When governance is removed, visibility increases while control weakens. Lean TPS restores Quality by governing conditions before work begins, not by reporting outcomes after loss occurs.